Human language as a model

David Schlangen has been Professor of the “Foundations of Computational Linguistics” at the University of Potsdam for three years. But he is not entering new territory here. At the beginning of the millennium, he already did research in Potsdam for many years as a postdoc and, starting in 2006, led the Emmy Noether Group “Incrementality and Projection in Dialogue Processing”. From 2010, he was Professor of Applied Computational Linguistics in Bielefeld, where he was involved in the Cluster of Excellence “Cognitive Interaction Technology”. “I came back because of the very good institute, the highly qualified colleagues, and the opportunities this position offers me,” he says. “In addition, the Cognitive Systems Master’s degree program is excellent and brings a steady stream of very good students to Potsdam.”

In addition to computational linguistics, Schlangen studied computer science and philosophy. He came to computational linguistics via a small detour. “In Bonn, I first studied physics for two or three semesters, already with an interest in philosophy. But at least in my basic studies, this interest wasn’t satisfied.” A school friend then told him about computational linguistics, a subject he had not heard of at the time. “It was still a bit exotic in the 1990s when I was studying.” So he began this course of studies together with his friend in Bonn. Schlangen can still build a bridge to philosophy today because both the philosophy of language and computational linguistics deal with the creation of meaning in interaction. Twenty years ago, however, hardly anyone in computational linguistics was doing research on dialogue systems, according to Schlangen. Today, natural language processing, and dialogue research as part of it, is well established as a central field of artificial intelligence. But not all researchers have a background in linguistics. “Many in the field think that, as computer scientists, they don’t need to know much about the domain in which the machine is supposed to learn,” Schlangen says. “I naturally see this differently. From my point of view, it’s important to stay as close as possible to language processing the way it happens in humans.”

In the Emmy Noether Group, Schlangen and his team worked on dialogue systems that process utterances even before they are complete. “Speech systems usually listen until you are quiet and only then start processing what was said.” We humans often find this behavior “unnatural” or disturbing. “After all, we already understand a statement, at least partially, while our counterpart is still speaking and can react to it,” he says. That’s why we constantly give so-called feedback signals: we nod or say “mhm” to show that we are following. “A listener influences the speaker through his or her continuous feedback, and a good speaker constantly responds to the listener’s signals.” These sometimes subtle signs are what interest Schlangen in conversation research.
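
The difference between the two processing styles can be illustrated with a minimal sketch. It is not the group's actual system: the tiny word lists and the function names `incremental_understanding` and `wait_until_silence` are assumptions made up for this illustration. The incremental version updates a partial interpretation after every word, so a listener could already react in mid-utterance, while the conventional version only reports a result once the speaker has finished.

```python
from typing import Dict, Iterable, Iterator, Optional, Tuple

# Hypothetical toy vocabulary, invented for this illustration only.
COLOURS = {"green", "brown", "silver"}
OBJECTS = {"board", "screw", "piece"}

Interpretation = Dict[str, Optional[str]]


def incremental_understanding(words: Iterable[str]) -> Iterator[Tuple[str, Interpretation]]:
    """Yield an updated partial interpretation after every incoming word."""
    state: Interpretation = {"colour": None, "object": None}
    for word in words:
        token = word.lower().strip(".,!?")
        if token in COLOURS:
            state["colour"] = token
        elif token in OBJECTS:
            state["object"] = token
        # A copy is yielded so that earlier partial results stay unchanged.
        yield word, dict(state)


def wait_until_silence(words: Iterable[str]) -> Interpretation:
    """Conventional behavior: return only the interpretation of the full utterance."""
    final: Interpretation = {}
    for _, final in incremental_understanding(words):
        pass
    return final


if __name__ == "__main__":
    for word, partial in incremental_understanding("Take the green board".split()):
        print(f"{word:>6} -> {partial}")
```

In this sketch, the colour is already known after the third word, before the object has been named. That is the point at which an incremental system could, for example, direct its gaze toward the green pieces.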

When computers tell us what to do

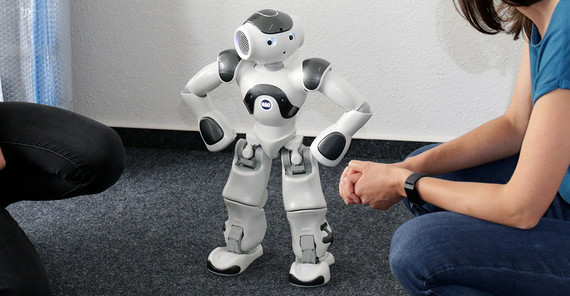

Prof. Schlangen and his team focus on language, but the researchers also take into consideration the human body and its physical environment. “Our goal is that artificial systems are situated in time in the same way that human conversation partners would be,” Schlangen says. “We want to get to the point where an interaction with agents is more like a face-to-face conversation than a chat conversation.” That is why the Potsdam computational linguists like to use robots that have a body or at least a head – such as Furhat, which is available for studies in the computational linguistics lab at the University of Potsdam. In the RECOLAGE project, Furhat gives humans instructions in the game Pentomino. Schlangen has been using the game, which is reminiscent of the classic Tetris, since his postdoc days. “It lends itself to being a simplified domain that offers certain degrees of freedom but at the same time a relatively large amount of control.” While the instruction is being given, the robot can see from the human’s reactions whether it has been understood correctly. It might someday be possible, for example, for a computer system to help us assemble a piece of furniture according to Ikea instructions, partly thanks to another area of artificial intelligence – image processing.

“Take that board over there and the little silver screws,” such a computer system might say to us. With the help of a tablet, the system would not only record what we say, but also watch us via a camera and help us to assemble the various components around us. If we reach for the wrong part, it should correct us immediately. “No, not the brown one, but the green one next to it.” “The linguistic correction results from the respective context, which is what makes it so complicated,” says Schlangen. “Because it’s about milliseconds here.” If, for example, the computer system observes that the human’s hand is moving in the wrong direction, it should react while the hand is still moving – in real time and not only when the screw has landed in the wrong board. That’s why the computer has to anticipate where the human is going to reach. This can also go wrong if the robot predicts a movement that we didn’t intend to make. “But something like that can also lead to disturbances between two people who know each other well,” says Schlangen and laughs. For humans to interpret and carry out the system’s instructions easily, machine and human must negotiate a common basis of understanding. Which technical terms should the computer explain, and which can it assume to be known? After all, if the human counterpart does not understand the instructions or does not feel properly understood, he or she will find the communication exhausting. Then the system would no longer be a real support.
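
How a system might anticipate a reach and correct it before the hand arrives can be sketched roughly as follows. This is a deliberately simple illustration and not the method used in RECOLAGE: it assumes 2D positions, a known hand velocity, and a made-up heuristic that picks the object lying most directly along the current direction of movement (highest cosine similarity). The names `predict_reach_target` and `correction` are hypothetical.

```python
import math
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]


def predict_reach_target(hand: Point, velocity: Point,
                         objects: Dict[str, Point]) -> Optional[str]:
    """Guess the object being reached for from the hand's direction of movement.

    Assumed heuristic: choose the object whose direction from the hand has the
    highest cosine similarity with the hand's velocity vector.
    """
    speed = math.hypot(velocity[0], velocity[1])
    if speed == 0:
        return None  # The hand is not moving, so there is nothing to predict.
    best_name: Optional[str] = None
    best_score = -2.0  # Lower than any possible cosine value.
    for name, (ox, oy) in objects.items():
        dx, dy = ox - hand[0], oy - hand[1]
        distance = math.hypot(dx, dy)
        if distance == 0:
            return name  # The hand is already at this object.
        score = (dx * velocity[0] + dy * velocity[1]) / (distance * speed)
        if score > best_score:
            best_name, best_score = name, score
    return best_name


def correction(predicted: Optional[str], intended: str) -> Optional[str]:
    """Return a spoken correction if the predicted target is the wrong one."""
    if predicted is None or predicted == intended:
        return None
    return f"No, not the {predicted} one, but the {intended} one."


if __name__ == "__main__":
    parts = {"brown": (1.0, 0.0), "green": (0.0, 1.0)}
    guess = predict_reach_target(hand=(0.0, 0.0), velocity=(1.0, 0.1), objects=parts)
    print(correction(guess, intended="green"))
```

Because the prediction rests only on the direction of movement, it can be wrong when the hand changes course, which corresponds to the failure case described above: the system corrects a movement the human never intended to make.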

Even though the question of application is not part of the research project, the results of RECOLAGE could plausibly be used in personal assistants of all kinds. It is also conceivable that an artificial agent will guide us through a recipe while cooking. “Amazon is currently working on the development of such a system,” the researcher tells us. Computer scientists in industry often deal with problems similar to those of Schlangen and his team. “There is an extreme amount of money going around in my field. Large companies operate research departments and also publish.” In Potsdam, however, basic research is carried out; it’s not about product development here. “In this respect, there is largely friendly competition between us and private industry.”

Simulating human behavior with computer models

Machine learning is an essential element of Schlangen’s research. The point is to generate knowledge from experience: the computer system receives training data, from which it derives rules that it can, ideally, apply to new situations. However, the computational linguist is to some extent critical of deep learning models – a specific method of machine learning that uses artificial neural networks with many layers. “I come from a slightly more traditional approach, where it is common to start theoretically. We want to develop theories that explain something, not just ‘do’ something, as is the case with deep learning models.” Such models can look very convincing when it comes to a short chat conversation, for example. “But when we’re dealing with an embodied computer system, a robot in human form, there’s a lot that needs to be controlled simultaneously – not just speech but also facial expressions and gestures, all in a short time.” After all, the robot can’t hold one facial expression for ten seconds until the next one comes, because the human would interpret that, too. Because deep learning models have not learned from situations in real time but from “dead” data, they are, in Schlangen’s view, only suitable to a limited extent for processing information under time pressure and reacting accordingly. “In order for the behavior of the artificial agents to fit the situation, we need more computer models that are extremely dynamic.”

That is why the researcher is working with cognitive scientists of the University of Potsdam who model cognitive processes. “We use different models from our colleagues who work very precisely on smaller phenomena, such as predicting eye movements. Our task is to put these theories together and, in this way, develop language systems that simulate human behavior.” The researchers also plan to use robots for psychological or linguistic experiments, which is a rather unusual approach so far but one for which there are good arguments. “They are very well suited as a controlled counterpart. A robot can mercilessly produce stimuli that are exactly identical for every subject.”

When computer systems seemingly become more and more like us, the question arises of how they differ from us at all. In his research, Schlangen is often confronted with the phenomenon that precisely what is easy for us humans is difficult for computers (and vice versa – just think of demanding arithmetic tasks). Small talk, for example, is an uncomplicated everyday activity for humans, but it poses challenges for computer systems. They regularly make mistakes. “That’s not so problematic. It happens to us, too. But unlike computers, we are very good at correcting ourselves.” For the scientist, it is therefore clear that computer systems cannot perfectly simulate us humans. On the contrary, it should always be clear that they are artificial agents. “But they should be understandable and react appropriately to the situation, behave ‘naturally’.” But why is it apparently so difficult to achieve ‘natural’ behavior in artificial agents? “Perhaps they lack something fundamental,” Schlangen suggests. “They are fed text, whereas the newborn is encouraged and challenged in interactions from the very first moment. Interaction is fundamental to us humans and is one of our innate learning mechanisms. We do not learn in passive observational situations but want to understand and communicate. In my view, it is essential for our human interaction that we understand each other and our counterparts as intentional agents.” Schlangen therefore does not believe that computer systems will soon be able to communicate naturally, or perhaps even intentionally, with the help of the current methods. “On the other hand, ten years ago, I would not have thought possible what artificial intelligence can do today.”

The Project

RECOLAGE: Real-Time Vision-Grounded Collaborative Language Generation

Funding: German Research Foundation (DFG)

Duration: Dec. 2019–Nov. 2023

Principal investigator: Prof. Dr. David Schlangen

The Researcher

Prof. Dr. David Schlangen studied computational linguistics, computer science, and philosophy. Since 2019, he has been Professor of the “Foundations of Computational Linguistics” at the University of Potsdam.

E-Mail: david.schlangen@uni-potsdam.de

This text was published in the university magazine Portal Wissen – One 2023 “Learning” (PDF).