Not only people but also computers learn. Character recognition and spam detection are examples of how computer programs automatically learn to make predictions. Learning theory deals with the mathematical analysis of the properties of such methods and is closely linked to statistics. Gilles Blanchard, Professor of Mathematical Statistics, conducts research in this field.

A machine, an artificial system, learns from examples and then generalizes with the help of mathematical models. By analyzing individual examples, the system “recognizes” regularities that it can then use to evaluate unknown data. This approach is used for automated diagnostic methods, credit card fraud detection, stock market analysis, DNA sequence classification, and speech and handwriting recognition.

Machine learning processes large quantities of data, pictures, or texts. When different people write the digit 2, they produce a corresponding number of “individual twos”. The databases generated in this way provide the foundation for learning and prediction programs. For example, the machine learns to recognize automatically which digits have been written on letters. “The program is fed with examples, compares pictures and recognizes similarities. These examples are used to create a classification,” Blanchard describes the procedure. This helps to identify addresses on postal items during automatic sorting, as well as licence plates. Not surprisingly, the machine learns to recognize printed text much more easily than handwriting. Speech recognition is extremely useful, as is automatic translation, a classic example of machine learning. Such translations are not perfect, but they do provide a basic structure. Even faces can be recognized in this way.
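The idea of comparing a new picture with stored examples can be illustrated with a very small sketch. It is not the method used by Blanchard's group, only a toy nearest-neighbour comparison under simplifying assumptions: the 3-by-5 pixel "digits", the function names and the example set are all invented for this illustration.

```python
# Toy illustration: classify tiny 3x5 "handwritten" digits by comparing
# a new picture with labelled examples (1-nearest-neighbour).
# The bitmaps and all names here are made up for this sketch.

def to_pixels(rows):
    """Flatten a list of strings ('#' = ink) into a list of 0/1 pixels."""
    return [1 if ch == "#" else 0 for row in rows for ch in row]

def distance(a, b):
    """Count the pixels in which two pictures differ (Hamming distance)."""
    return sum(x != y for x, y in zip(a, b))

def classify(picture, examples):
    """Return the label of the stored example most similar to `picture`."""
    label, _ = min(
        ((lab, distance(picture, ex)) for lab, ex in examples),
        key=lambda pair: pair[1],
    )
    return label

# Labelled examples: two different "ones" and two different "twos".
EXAMPLES = [
    ("1", to_pixels([".#.", "##.", ".#.", ".#.", "###"])),
    ("1", to_pixels(["..#", ".##", "..#", "..#", "..#"])),
    ("2", to_pixels(["###", "..#", "###", "#..", "###"])),
    ("2", to_pixels(["##.", "..#", ".#.", "#..", "###"])),
]

# A "2" written slightly differently from both stored twos.
new_picture = to_pixels(["###", "..#", ".##", "#..", "###"])
predicted = classify(new_picture, EXAMPLES)
print(predicted)
```

Real systems work with far larger pictures, many thousands of examples and more robust notions of similarity, but the principle of generalizing from labelled examples is the same.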

Machine learning methods are used for many applications in bioinformatics: physicians work with large amounts of data, such as information from magnetic resonance imaging, computed tomography, and genetic data. Machines are helpful assistants in these fields, for example in detecting diseases like breast cancer.

As with human beings, the learning process for machines is complicated and strenuous because it always has to capture many variations. In order to invent new architectures and methods, programmers often draw analogies to the human brain. “It is extremely difficult to establish logical rules because you cannot always identify the logic of nature,” Blanchard says. Because of the many random variations and errors, the mathematical tools of probability theory are well suited to the analysis.

Computer science, probability theory and statistics are inextricably linked in machine learning. Gilles Blanchard’s scientific career illustrates the connections between these fields and their development. He studied mathematics in Paris and completed his PhD there. In 2002, the scientist started working at the Fraunhofer Institute for Computer Architecture and Software Technology in Berlin, where he was mainly engaged in machine learning. Since 2009, Blanchard has been working in the statistics group at the Weierstrass Institute for Applied Analysis and Stochastics in Berlin. He became Professor of Mathematical Statistics at the Institute of Mathematics of the University of Potsdam in 2010. For him, the appeal lies in working at the intersection of these interacting scientific fields. This is why the mathematician collaborates closely with the computer scientist Tobias Scheffer, Professor of Machine Learning at the Department of Computer Science.

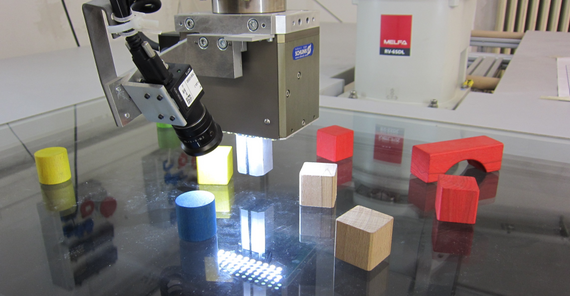

Blanchard and his colleagues worked on the MASH (Massive Sets of Heuristics) project for three years. This was an EU-funded project to develop a common platform for collaborative machine learning. In addition to the University of Potsdam, four partner institutions from Switzerland, France, and the Czech Republic took part. They wanted to establish a learning system in collaboration with large groups of contributors from various disciplines and with different backgrounds. “The basic idea of the project is to use the expertise of many individuals and to combine the programs they developed to extract features,” says PhD student Andre Beinrucker. Different individual styles and perspectives yield many program components that ultimately form one large system.
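How small feature-extraction programs from different contributors might be combined can be sketched as follows. This is only an illustration of the general idea, not the MASH platform's actual interface: the three extractor functions, the `combine` helper and the tiny example image are hypothetical.

```python
# Hypothetical sketch: each contributor supplies one small feature extractor;
# a shared helper concatenates all outputs into a single feature vector.
# An image is a list of rows, each row a list of 0/1 pixels.

def ink_fraction(image):
    """Contributor A: share of dark pixels in the image."""
    pixels = [p for row in image for p in row]
    return [sum(pixels) / len(pixels)]

def row_sums(image):
    """Contributor B: number of dark pixels in each row."""
    return [sum(row) for row in image]

def left_right_balance(image):
    """Contributor C: dark pixels in the left half minus the right half."""
    half = len(image[0]) // 2
    left = sum(sum(row[:half]) for row in image)
    right = sum(sum(row[-half:]) for row in image)
    return [left - right]

def combine(extractors, image):
    """Run every extractor and join the results into one feature vector."""
    features = []
    for extract in extractors:
        features.extend(extract(image))
    return features

image = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 0],
]
features = combine([ink_fraction, row_sums, left_right_balance], image)
print(features)
```

No single extractor describes the picture completely, but a learning method can work with the combined vector, and adding another contributor's function only means extending the list passed to `combine`.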

Using learning methods developed in the project, a robot arm learns “of its own accord”, initially by trial and error, to accomplish simple tasks such as separating a red cube from other shapes. All parties involved in the project deliver “small” pieces of information and programs. “The point is not to write a complete program that solves everything. Each piece of information is important, and this is why we work collaboratively,” Beinrucker says.

The Project

Massive Sets of Heuristics (MASH)

Funded by: European Union

Duration: 2011 – 2013

The Scientist

Professor Gilles Blanchard studied mathematics in Paris. Since 2010 he has been Professor of Mathematical Statistics at the University of Potsdam.

Contact

Universität Potsdam

Institut für Mathematik

Am Neuen Palais 10,

14469 Potsdam

E-Mail: gilles.blanchard@math.uni-potsdam.de

Text: Dr. Barbara Eckardt, Online-Editing: Agnes Bressa, Translation: Susanne Voigt